What is The Null Hypothesis & When Do You Reject The Null Hypothesis

Julia Simkus

Editor at Simply Psychology

BA (Hons) Psychology, Princeton University

Julia Simkus is a graduate of Princeton University with a Bachelor of Arts in Psychology. She is currently studying for a Master's Degree in Counseling for Mental Health and Wellness in September 2023. Julia's research has been published in peer reviewed journals.


Saul Mcleod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul Mcleod, PhD., is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.

Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.


A null hypothesis is a statistical concept suggesting no significant difference or relationship between measured variables. It’s the default assumption unless empirical evidence proves otherwise.

The null hypothesis states no relationship exists between the two variables being studied (i.e., one variable does not affect the other).

The null hypothesis is the statement that a researcher or an investigator wants to disprove.

Testing the null hypothesis can tell you whether your results are due to the effects of manipulating the independent variable or due to random chance.

How to Write a Null Hypothesis

Null hypotheses (H0) start as research questions that the investigator rephrases as statements indicating no effect or relationship between the independent and dependent variables.

It is a default position that your research aims to challenge or confirm.

For example, if studying the impact of exercise on weight loss, your null hypothesis might be:

There is no significant difference in weight loss between individuals who exercise daily and those who do not.

Examples of Null Hypotheses

| Research Question | Null Hypothesis |

|---|---|

| Do teenagers use cell phones more than adults? | Teenagers and adults use cell phones the same amount. |

| Do tomato plants exhibit a higher rate of growth when planted in compost rather than in soil? | Tomato plants show no difference in growth rates when planted in compost rather than soil. |

| Does daily meditation decrease the incidence of depression? | Daily meditation does not decrease the incidence of depression. |

| Does daily exercise increase test performance? | There is no relationship between daily exercise time and test performance. |

| Does the new vaccine prevent infections? | The vaccine does not affect the infection rate. |

| Does flossing your teeth affect the number of cavities? | Flossing your teeth has no effect on the number of cavities. |

When Do We Reject The Null Hypothesis?

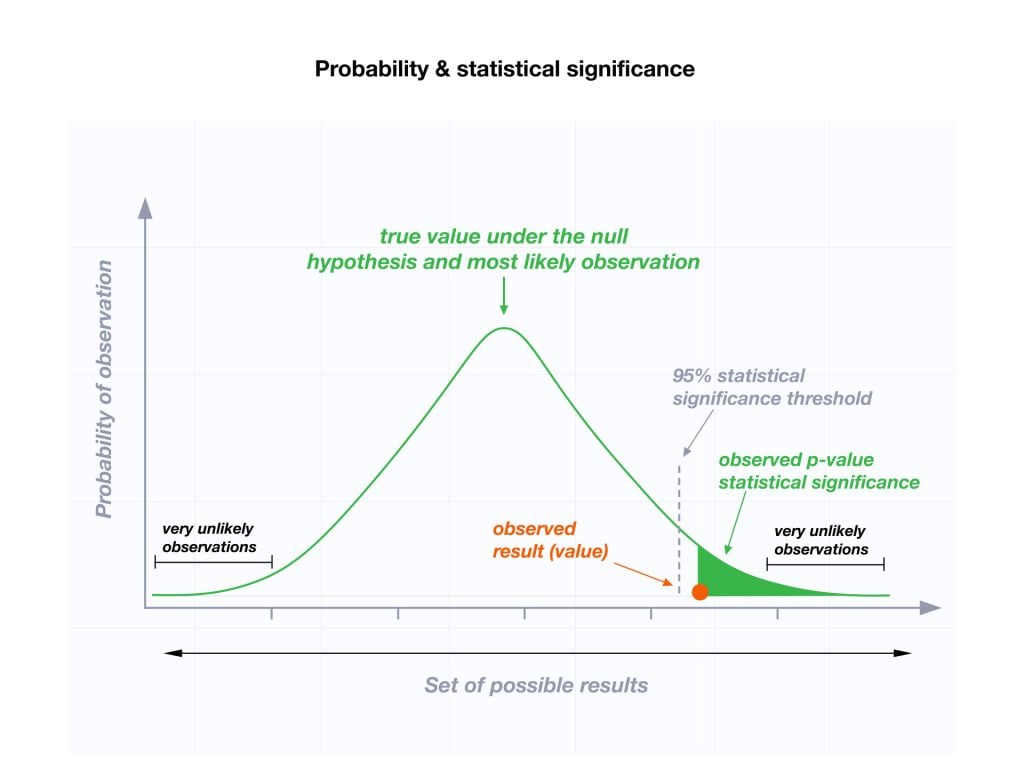

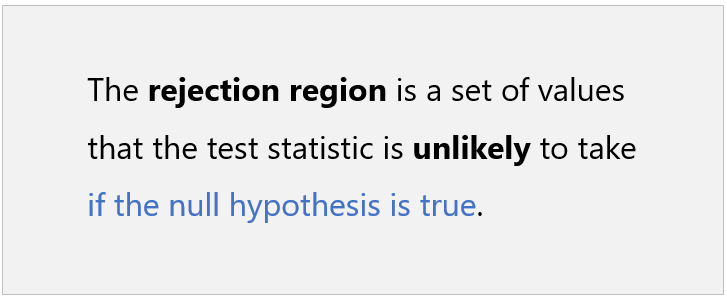

We reject the null hypothesis when the data provide strong enough evidence to conclude that it is likely incorrect. This usually means that the p-value (the probability of observing data at least as extreme as ours if the null hypothesis is true) falls below a predetermined significance level.

If the collected data are inconsistent with what the null hypothesis predicts, a researcher can conclude that the data do not support the null hypothesis, and thus the null hypothesis is rejected.

Rejecting the null hypothesis indicates that the data support a relationship between the variables and that the effect is statistically significant (p < 0.05).

If the data collected from the random sample are not statistically significant, then we fail to reject the null hypothesis, and the researchers cannot conclude that a relationship exists between the variables.

You need to perform a statistical test on your data in order to evaluate how consistent it is with the null hypothesis. A p-value is one statistical measurement used to validate a hypothesis against observed data.

Calculating the p-value is a critical part of null-hypothesis significance testing because it quantifies how strongly the sample data contradicts the null hypothesis.

The level of statistical significance is often expressed as a p-value between 0 and 1. The smaller the p-value, the stronger the evidence that you should reject the null hypothesis.

Usually, a researcher uses a significance level of 0.05 or 0.01 (corresponding to a confidence level of 95% or 99%) as a general guideline to decide whether to reject or retain the null.

When your p-value is less than or equal to your significance level, you reject the null hypothesis.

In other words, smaller p-values are taken as stronger evidence against the null hypothesis. Conversely, when the p-value is greater than your significance level, you fail to reject the null hypothesis.

In this case, the sample data provide insufficient evidence to conclude that the effect exists in the population.
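The decision rule described above can be sketched in a few lines of Python. The function name `decide` and its default significance level of 0.05 are illustrative assumptions, not part of any library.

```python
def decide(p_value: float, alpha: float = 0.05) -> str:
    """Return the test decision for a p-value at significance level alpha."""
    if not (0.0 <= p_value <= 1.0 and 0.0 < alpha < 1.0):
        raise ValueError("p_value must be in [0, 1] and alpha in (0, 1)")
    # Reject when p <= alpha; otherwise the evidence is insufficient.
    return "reject H0" if p_value <= alpha else "fail to reject H0"


print(decide(0.03))        # reject H0
print(decide(0.20))        # fail to reject H0
print(decide(0.03, 0.01))  # fail to reject H0
```

Note that the function never returns "accept H0": the only two outcomes are rejecting the null or failing to reject it.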

Because you can never know with complete certainty whether there is an effect in the population, your inferences about a population will sometimes be incorrect.

When you incorrectly reject the null hypothesis, it’s called a type I error. When you incorrectly fail to reject it, it’s called a type II error.
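To make the type I error concrete, here is a small simulation sketch. It assumes a two-tailed z-test with a known standard deviation, and every sample is drawn from a population in which the null hypothesis really is true; the helper names, the seed, and the sample sizes are illustrative choices. Under these assumptions, the share of false rejections should come out close to the significance level.

```python
import random
from statistics import NormalDist, mean


def z_test_p_value(sample, mu0, sigma):
    """Two-tailed p-value of a z-test for H0: population mean == mu0 (sigma known)."""
    z = (mean(sample) - mu0) / (sigma / len(sample) ** 0.5)
    return 2 * (1 - NormalDist().cdf(abs(z)))


def type_i_error_rate(trials=2000, n=30, alpha=0.05, seed=1):
    """Share of simulated studies that reject H0 even though H0 is true."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        # Data are drawn with the null true: mean 0, standard deviation 1.
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        if z_test_p_value(sample, mu0=0.0, sigma=1.0) <= alpha:
            rejections += 1
    return rejections / trials


rate = type_i_error_rate()
print(f"Observed type I error rate: {rate:.3f}")
```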

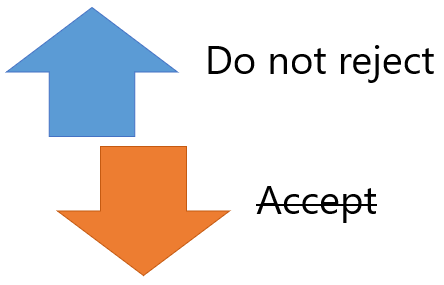

Why Do We Never Accept The Null Hypothesis?

The reason we do not say “accept the null” is because we are always assuming the null hypothesis is true and then conducting a study to see if there is evidence against it. And, even if we don’t find evidence against it, a null hypothesis is not accepted.

A lack of evidence only means that you haven’t proven that something exists. It does not prove that something doesn’t exist.

It is risky to conclude that the null hypothesis is true merely because we did not find evidence to reject it. It is always possible that researchers elsewhere have disproved the null hypothesis, so we cannot accept it as true, but instead, we state that we failed to reject the null.

One can either reject the null hypothesis, or fail to reject it, but can never accept it.

Why Do We Use The Null Hypothesis?

We can never prove with 100% certainty that a hypothesis is true; we can only collect evidence that supports a theory. However, testing a hypothesis can set the stage for rejecting or failing to reject it at a chosen significance level.

The null hypothesis is useful because it can tell us whether the results of our study are due to random chance or the manipulation of a variable (with a certain level of confidence).

A null hypothesis is rejected if the measured data would be very unlikely to occur were it true, and it is not rejected if the observed outcome is consistent with the position held by the null hypothesis.

Rejecting the null hypothesis sets the stage for further experimentation to see if a relationship between two variables exists.

Hypothesis testing is a critical part of the scientific method as it helps decide whether the results of a research study support a particular theory about a given population. Hypothesis testing is a systematic way of backing up researchers’ predictions with statistical analysis.

It helps provide sufficient statistical evidence that either favors or rejects a certain hypothesis about the population parameter.

Purpose of a Null Hypothesis

- The primary purpose of the null hypothesis is to disprove an assumption.

- Whether rejected or not, the null hypothesis can help further progress a theory in many scientific cases.

- A null hypothesis can be used to ascertain how consistent the outcomes of multiple studies are.

Do you always need both a Null Hypothesis and an Alternative Hypothesis?

The null (H0) and alternative (Ha or H1) hypotheses are two competing claims that describe the effect of the independent variable on the dependent variable. They are mutually exclusive, which means that only one of the two hypotheses can be true.

While the null hypothesis states that there is no effect in the population, an alternative hypothesis states that there is an effect or relationship between the two variables.

The goal of hypothesis testing is to make inferences about a population based on a sample. In order to undertake hypothesis testing, you must express your research hypothesis as a null and alternative hypothesis. Both hypotheses are required to cover every possible outcome of the study.

What is the difference between a null hypothesis and an alternative hypothesis?

The alternative hypothesis is the complement to the null hypothesis. The null hypothesis states that there is no effect or no relationship between variables, while the alternative hypothesis claims that there is an effect or relationship in the population.

It is the claim that you expect or hope will be true. The null hypothesis and the alternative hypothesis are always mutually exclusive, meaning that only one can be true at a time.

What are some problems with the null hypothesis?

One major problem with the null hypothesis is that researchers typically assume that failing to reject the null is a failure of the experiment. However, rejecting or failing to reject a hypothesis is an informative result either way. Even if the null is not refuted, the researchers will still learn something new.

Why can a null hypothesis not be accepted?

We can either reject or fail to reject a null hypothesis, but never accept it. If your test fails to detect an effect, this is not proof that the effect doesn’t exist. It just means that your sample did not have enough evidence to conclude that it exists.

We can’t accept a null hypothesis because a lack of evidence does not prove that something does not exist. Instead, we fail to reject it.

Failing to reject the null indicates that the sample did not provide sufficient evidence to conclude that an effect exists.

If the p-value is greater than the significance level, then you fail to reject the null hypothesis.

Is a null hypothesis directional or non-directional?

A hypothesis test can contain either a directional or a non-directional alternative hypothesis. A directional hypothesis is one that contains the less than (“<”) or greater than (“>”) sign.

A nondirectional hypothesis contains the not equal sign (“≠”). However, a null hypothesis is neither directional nor non-directional.

A null hypothesis is a prediction that there will be no change, relationship, or difference between two variables.

The directional hypothesis or nondirectional hypothesis would then be considered alternative hypotheses to the null hypothesis.



When Do You Reject the Null Hypothesis? (3 Examples)

A hypothesis test is a formal statistical test we use to reject or fail to reject a statistical hypothesis.

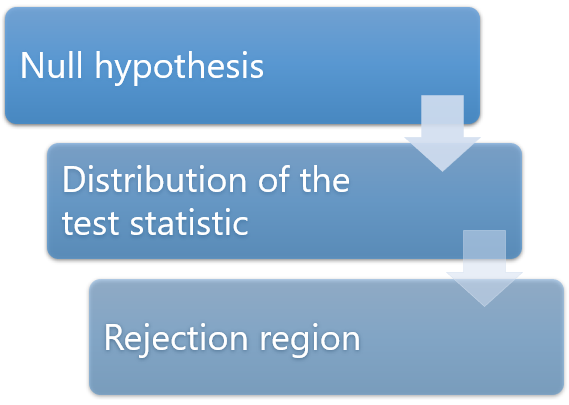

We always use the following steps to perform a hypothesis test:

Step 1: State the null and alternative hypotheses.

The null hypothesis , denoted as H 0 , is the hypothesis that the sample data occurs purely from chance.

The alternative hypothesis , denoted as H A , is the hypothesis that the sample data is influenced by some non-random cause.

2. Determine a significance level to use.

Decide on a significance level. Common choices are .01, .05, and .1.

3. Calculate the test statistic and p-value.

Use the sample data to calculate a test statistic and a corresponding p-value .

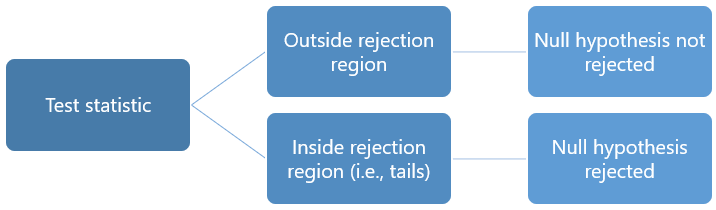

4. Reject or fail to reject the null hypothesis.

If the p-value is less than the significance level, then you reject the null hypothesis.

If the p-value is not less than the significance level, then you fail to reject the null hypothesis.

You can use the following clever line to remember this rule:

“If the p is low, the null must go.”

In other words, if the p-value is low enough then we must reject the null hypothesis.

The following examples show when to reject (or fail to reject) the null hypothesis for the most common types of hypothesis tests.

Example 1: One Sample t-test

A one sample t-test is used to test whether or not the mean of a population is equal to some value.

For example, suppose we want to know whether or not the mean weight of a certain species of turtle is equal to 310 pounds.

We go out and collect a simple random sample of 40 turtles with the following information:

- Sample size n = 40

- Sample mean weight x̄ = 300

- Sample standard deviation s = 18.5

We can use the following steps to perform a one sample t-test:

Step 1: State the Null and Alternative Hypotheses

We will perform the one sample t-test with the following hypotheses:

- H0: μ = 310 (population mean is equal to 310 pounds)

- HA: μ ≠ 310 (population mean is not equal to 310 pounds)

We will choose to use a significance level of 0.05.

We can plug in the numbers for the sample size, sample mean, and sample standard deviation into this One Sample t-test Calculator to calculate the test statistic and p-value:

- t test statistic: -3.4187

- two-tailed p-value: 0.0015

Since the p-value (0.0015) is less than the significance level (0.05), we reject the null hypothesis.

We conclude that there is sufficient evidence to say that the mean weight of turtles in this population is not equal to 310 pounds.
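As a rough cross-check of the calculator output, the one sample t-test can be reproduced from the summary statistics alone. The sketch below uses only the Python standard library, so the p-value comes from numerically integrating the t density with Simpson's rule. The helper names `t_pdf` and `two_tailed_p` are illustrative, and a statistics package would normally supply this step.

```python
import math


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_norm = math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
    return math.exp(log_norm) / math.sqrt(df * math.pi) * (1 + x * x / df) ** (-(df + 1) / 2)


def two_tailed_p(t, df, steps=2000):
    """Two-tailed p-value: 1 minus the area of the t density between -|t| and |t|."""
    upper = abs(t)
    h = upper / steps
    # Composite Simpson's rule on [0, |t|]; steps must be even.
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area_0_to_t = total * h / 3
    return 1 - 2 * area_0_to_t


# Summary statistics from the turtle example.
n, sample_mean, sample_sd, mu0 = 40, 300, 18.5, 310
t_stat = (sample_mean - mu0) / (sample_sd / math.sqrt(n))
p_value = two_tailed_p(t_stat, df=n - 1)
print(f"t = {t_stat:.4f}, p = {p_value:.4f}")
```

The printed values should land close to the test statistic and p-value quoted above.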

Example 2: Two Sample t-test

A two sample t-test is used to test whether or not two population means are equal.

For example, suppose we want to know whether or not the mean weight between two different species of turtles is equal.

We go out and collect a simple random sample from each population with the following information:

- Sample size n1 = 40

- Sample mean weight x̄1 = 300

- Sample standard deviation s1 = 18.5

- Sample size n2 = 38

- Sample mean weight x̄2 = 305

- Sample standard deviation s2 = 16.7

We can use the following steps to perform a two sample t-test:

We will perform the two sample t-test with the following hypotheses:

- H0: μ1 = μ2 (the two population means are equal)

- H1: μ1 ≠ μ2 (the two population means are not equal)

We will choose to use a significance level of 0.10.

We can plug in the numbers for the sample sizes, sample means, and sample standard deviations into this Two Sample t-test Calculator to calculate the test statistic and p-value:

- t test statistic: -1.2508

- two-tailed p-value: 0.2149

Since the p-value (0.2149) is not less than the significance level (0.10), we fail to reject the null hypothesis.

We do not have sufficient evidence to say that the mean weight of turtles between these two populations is different.
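The two sample figures can be checked the same way. This sketch assumes the equal-variance (pooled) form of the two sample t-test, which is consistent with the test statistic reported above, and it approximates the p-value by midpoint-rule integration of the t density. The helper name `t_two_tailed_p` is an illustrative choice.

```python
import math


def t_two_tailed_p(t, df, steps=20000):
    """Approximate the two-tailed p-value by midpoint-rule integration of the t density."""
    log_norm = math.lgamma((df + 1) / 2) - math.lgamma(df / 2) - 0.5 * math.log(df * math.pi)
    h = abs(t) / steps
    area = sum(
        math.exp(log_norm - (df + 1) / 2 * math.log1p(((i + 0.5) * h) ** 2 / df))
        for i in range(steps)
    ) * h
    return 1 - 2 * area


# Summary statistics from the two turtle samples.
n1, mean1, sd1 = 40, 300, 18.5
n2, mean2, sd2 = 38, 305, 16.7

df = n1 + n2 - 2
# Pooled variance weights each sample variance by its degrees of freedom.
pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / df
std_err = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
t_stat = (mean1 - mean2) / std_err
p_value = t_two_tailed_p(t_stat, df)
print(f"t = {t_stat:.4f}, df = {df}, p = {p_value:.4f}")
```

If the two populations could not be assumed to share a variance, Welch's version of the test would use a different standard error and degrees of freedom.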

Example 3: Paired Samples t-test

A paired samples t-test is used to compare the means of two samples when each observation in one sample can be paired with an observation in the other sample.

For example, suppose we want to know whether or not a certain training program is able to increase the max vertical jump of college basketball players.

To test this, we may recruit a simple random sample of 20 college basketball players and measure each of their max vertical jumps. Then, we may have each player use the training program for one month and then measure their max vertical jump again at the end of the month:

We can use the following steps to perform a paired samples t-test:

We will perform the paired samples t-test with the following hypotheses:

- H0: μ_before = μ_after (the two population means are equal)

- H1: μ_before ≠ μ_after (the two population means are not equal)

We will choose to use a significance level of 0.01.

We can plug in the raw data for each sample into this Paired Samples t-test Calculator to calculate the test statistic and p-value:

- t test statistic: -3.226

- two-tailed p-value: 0.0045

Since the p-value (0.0045) is less than the significance level (0.01), we reject the null hypothesis.

We have sufficient evidence to say that the mean vertical jump before and after participating in the training program is not equal.
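A paired samples t-test is equivalent to a one sample t-test on the per-subject differences. The sketch below shows that computation on a small hypothetical data set; the jump heights are invented for illustration and are not the article's data, so the resulting statistic will not match the figures above.

```python
import math
from statistics import mean, stdev

# Hypothetical jump heights in inches for 8 players (not the article's data).
before = [22, 24, 20, 25, 23, 21, 26, 24]
after = [23, 25, 20, 27, 24, 23, 26, 26]

# A paired test is a one-sample t-test on the per-player differences.
diffs = [b - a for b, a in zip(before, after)]
n = len(diffs)
t_stat = mean(diffs) / (stdev(diffs) / math.sqrt(n))
df = n - 1
print(f"mean difference = {mean(diffs):.3f}, t = {t_stat:.3f}, df = {df}")
```

The p-value would then be read from a t distribution with n - 1 degrees of freedom, for instance with a statistics library.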

Bonus: Decision Rule Calculator

You can use this decision rule calculator to automatically determine whether you should reject or fail to reject a null hypothesis for a hypothesis test based on the value of the test statistic.


Hey there. My name is Zach Bobbitt. I have a Masters of Science degree in Applied Statistics and I’ve worked on machine learning algorithms for professional businesses in both healthcare and retail. I’m passionate about statistics, machine learning, and data visualization and I created Statology to be a resource for both students and teachers alike. My goal with this site is to help you learn statistics through using simple terms, plenty of real-world examples, and helpful illustrations.


Hypothesis Testing (cont...)

Hypothesis testing: the null and alternative hypothesis

In order to undertake hypothesis testing you need to express your research hypothesis as a null and alternative hypothesis. The null hypothesis and alternative hypothesis are statements regarding the differences or effects that occur in the population. You will use your sample to test which statement (i.e., the null hypothesis or alternative hypothesis) is most likely (although technically, you test the evidence against the null hypothesis). So, with respect to our teaching example, the null and alternative hypothesis will reflect statements about all statistics students on graduate management courses.

The null hypothesis is essentially the "devil's advocate" position. That is, it assumes that whatever you are trying to prove did not happen ( hint: it usually states that something equals zero). For example, the two different teaching methods did not result in different exam performances (i.e., zero difference). Another example might be that there is no relationship between anxiety and athletic performance (i.e., the slope is zero). The alternative hypothesis states the opposite and is usually the hypothesis you are trying to prove (e.g., the two different teaching methods did result in different exam performances). Initially, you can state these hypotheses in more general terms (e.g., using terms like "effect", "relationship", etc.), as shown below for the teaching methods example:

| Null Hypothesis (H0): | Undertaking seminar classes has no effect on students' performance. |

| Alternative Hypothesis (HA): | Undertaking seminar classes has a positive effect on students' performance. |

How you want to "summarize" the exam performances will determine how you write a more specific null and alternative hypothesis. For example, you could compare the mean exam performance of each group (i.e., the "seminar" group and the "lectures-only" group). This is what we will demonstrate here, but other options include comparing the distributions or the medians, amongst other things. As such, we can state:

| Null Hypothesis (H0): | The mean exam mark for the "seminar" and "lecture-only" teaching methods is the same in the population. |

| Alternative Hypothesis (HA): | The mean exam mark for the "seminar" and "lecture-only" teaching methods is not the same in the population. |

Now that you have identified the null and alternative hypotheses, you need to find evidence and develop a strategy for declaring your "support" for either the null or alternative hypothesis. We can do this using some statistical theory and some arbitrary cut-off points. Both these issues are dealt with next.

Significance levels

The level of statistical significance is often expressed as the so-called p-value. Depending on the statistical test you have chosen, you will calculate a probability (i.e., the p-value) of observing your sample results (or more extreme) given that the null hypothesis is true. Another way of phrasing this is to consider the probability that a difference in a mean score (or other statistic) could have arisen based on the assumption that there really is no difference. Let us consider this statement with respect to our example where we are interested in the difference in mean exam performance between two different teaching methods. If there really is no difference between the two teaching methods in the population (i.e., given that the null hypothesis is true), how likely would it be to see a difference in the mean exam performance between the two teaching methods as large as (or larger than) that which has been observed in your sample?

So, you might get a p-value such as 0.03 (i.e., p = .03). This means that there is a 3% chance of finding a difference as large as (or larger than) the one in your study given that the null hypothesis is true. However, you want to know whether this is "statistically significant". Typically, if there was a 5% or less chance (5 times in 100 or less) that the difference in the mean exam performance between the two teaching methods (or whatever statistic you are using) is as different as observed given the null hypothesis is true, you would reject the null hypothesis and accept the alternative hypothesis. Alternatively, if the chance was greater than 5% (more than 5 times in 100), you would fail to reject the null hypothesis and would not accept the alternative hypothesis. As such, in this example where p = .03, we would reject the null hypothesis and accept the alternative hypothesis. We reject it because, if the null hypothesis were true, a difference this large would arise by chance only 3% of the time (less than our 5% threshold), which is too rare for us to attribute the result to chance alone.

Whilst there is relatively little justification why a significance level of 0.05 is used rather than 0.01 or 0.10, for example, it is widely used in academic research. However, if you want to be particularly confident in your results, you can set a more stringent level of 0.01 (a 1% chance or less; 1 in 100 chance or less).
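One way to see what "the probability of a difference this large given that the null hypothesis is true" means is a permutation test. The sketch below is an illustration rather than the method this guide uses: the exam marks are hypothetical, and the function name, seed, and number of permutations are assumptions. It repeatedly reshuffles the group labels, which is what "no difference between teaching methods" implies, and counts how often the shuffled difference in means is at least as large as the observed one.

```python
import random
from statistics import mean


def permutation_p_value(group_a, group_b, n_permutations=5000, seed=0):
    """Estimate a two-sided p-value for the difference in means by reshuffling labels."""
    rng = random.Random(seed)
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_large = 0
    for _ in range(n_permutations):
        # Under the null the group labels are interchangeable, so shuffle them.
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            at_least_as_large += 1
    return at_least_as_large / n_permutations


# Hypothetical exam marks, invented for illustration only.
seminar = [68, 74, 71, 80, 77, 69, 75, 73]
lecture_only = [65, 70, 66, 72, 68, 71, 64, 69]
p = permutation_p_value(seminar, lecture_only)
print(f"Estimated p-value: {p:.3f}")
```

The estimate is then compared with the chosen significance level in the usual way.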

One- and two-tailed predictions

When considering whether we reject the null hypothesis and accept the alternative hypothesis, we need to consider the direction of the alternative hypothesis statement. For example, the alternative hypothesis that was stated earlier is:

| Alternative Hypothesis (HA): | Undertaking seminar classes has a positive effect on students' performance. |

The alternative hypothesis tells us two things. First, what predictions did we make about the effect of the independent variable(s) on the dependent variable(s)? Second, what was the predicted direction of this effect? Let's use our example to highlight these two points.

Sarah predicted that her teaching method (independent variable: teaching method), whereby she not only required her students to attend lectures, but also seminars, would have a positive effect on (that is, would increase) students' performance (dependent variable: exam marks). If an alternative hypothesis has a direction (and this is how you want to test it), the hypothesis is one-tailed. That is, it predicts the direction of the effect. If the alternative hypothesis had stated that the effect was expected to be negative, this would also be a one-tailed hypothesis.

Alternatively, a two-tailed prediction means that we do not make a choice over the direction that the effect of the experiment takes. Rather, it simply implies that the effect could be negative or positive. If Sarah had made a two-tailed prediction, the alternative hypothesis might have been:

| Alternative Hypothesis (HA): | Undertaking seminar classes has an effect on students' performance. |

In other words, we simply take out the word "positive", which implies the direction of our effect. In our example, making a two-tailed prediction may seem strange. After all, it would be logical to expect that "extra" tuition (going to seminar classes as well as lectures) would either have a positive effect on students' performance or no effect at all, but certainly not a negative effect. However, this is just our opinion (and hope) and certainly does not mean that we will get the effect we expect. Generally speaking, making a one-tailed prediction (and testing for it this way) is frowned upon, as it usually reflects the hope of a researcher rather than any certainty that it will happen. Notable exceptions to this rule are when there is only one possible way in which a change could occur. This can happen, for example, when biological activity/presence is measured. That is, a protein might be "dormant" and the stimulus you are using can only possibly "wake it up" (i.e., it cannot possibly reduce the activity of a "dormant" protein). In addition, for some statistical tests, one-tailed tests are not possible.
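The practical difference between the two kinds of prediction shows up in the p-value. As a minimal sketch, assume a test statistic that follows a standard normal distribution under the null hypothesis (a z-test) and a hypothetical observed value of 1.8; the function name is illustrative.

```python
from statistics import NormalDist


def z_p_values(z):
    """Return (one-tailed upper, two-tailed) p-values for a z statistic."""
    std_normal = NormalDist()
    one_tailed = 1 - std_normal.cdf(z)             # H1: effect is positive
    two_tailed = 2 * (1 - std_normal.cdf(abs(z)))  # H1: effect in either direction
    return one_tailed, two_tailed


one_tailed, two_tailed = z_p_values(1.8)  # hypothetical test statistic
print(f"one-tailed p = {one_tailed:.4f}, two-tailed p = {two_tailed:.4f}")
```

With this statistic the one-tailed p-value falls below 0.05 while the two-tailed p-value does not, which illustrates why choosing a one-tailed test needs to be justified in advance.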

Rejecting or failing to reject the null hypothesis

Let's return finally to the question of whether we reject or fail to reject the null hypothesis.

If our statistical analysis shows that the p-value is below the significance level we have set (e.g., either 0.05 or 0.01), we reject the null hypothesis and accept the alternative hypothesis. Alternatively, if the p-value is above the significance level, we fail to reject the null hypothesis and cannot accept the alternative hypothesis. You should note that you cannot accept the null hypothesis, but only find evidence against it.

Accept or Fail to Reject? Understanding Hypothesis Testing

What is hypothesis testing?

Hypothesis testing is a cornerstone of statistical analysis used to infer the properties of a population based on sample data. This method involves setting up two opposing hypotheses—the null hypothesis, which posits no effect or no difference, and the alternative hypothesis, which suggests some effect or difference.

The decision-making process in hypothesis testing hinges on whether the evidence is sufficient to reject the null hypothesis, or if we fail to reject it , implying that the data does not provide strong enough proof to support the alternative. The outcome — rejecting or failing to reject the null hypothesis — helps researchers draw conclusions about the statistical significance and implications of their findings.

Description of Hypothesis Testing

When conducting a hypothesis test, statistical methods are used to decide whether to reject the null hypothesis or fail to reject it based on the data. "Failing to reject" the null hypothesis does not necessarily mean that we accept it as true. Instead, it means that there isn't enough evidence against the null hypothesis; hence, we do not adopt the alternative hypothesis. The decision fundamentally hinges on the calculation of the probability of observing the collected data (or data more extreme) assuming that the null hypothesis is true, which is quantified by the p-value.

1. If the p-value is low (typically less than a predetermined threshold like 0.05), it suggests that such data is very unlikely under the null hypothesis. In this case, the null hypothesis is rejected, and the alternative hypothesis is considered more likely.

2. If the p-value is high, indicating that the observed data is plausible under the null hypothesis, then we fail to reject the null hypothesis. This does not confirm the null hypothesis is true; rather, it suggests that the data does not provide strong evidence against it.

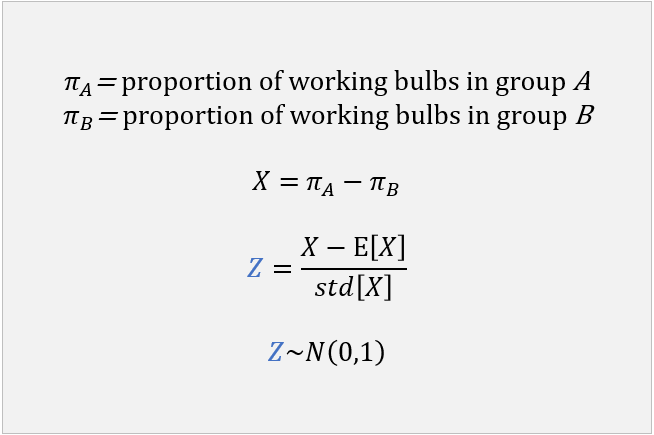

Role of P-Values and Test Statistics

A P-value, or probability value, is a key statistic in hypothesis testing used to measure the strength of the evidence against the null hypothesis. It is the probability of observing a test statistic as extreme as, or more extreme than, the one observed, assuming that the null hypothesis is true. If this P-value is less than the chosen significance level (often 0.05), then the null hypothesis is rejected in favor of the alternative. If it is greater, we fail to reject the null hypothesis. Test statistics like the t-statistic, z-score, and chi-square are calculated from the data and are used to determine the P-value.

Critical Values and Decision Making

Critical values are essential components in the framework of hypothesis testing, acting as cutoff points that help decide whether to reject the null hypothesis. The selection of these values is directly tied to the chosen confidence level, which typically might be 90%, 95%, or 99%. This confidence level is the long-run proportion of confidence intervals that would contain the true population parameter if you were to repeat the study many times; the corresponding significance level is one minus the confidence level (for example, 0.05 for 95%).
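As an illustration of how a confidence level translates into a cutoff, the sketch below computes two-sided critical values under the assumption that the test statistic is standard normal (a z-test). Other tests use other reference distributions, and the function name is an illustrative choice.

```python
from statistics import NormalDist


def two_sided_critical_z(confidence):
    """Critical z value that leaves (1 - confidence) / 2 in each tail."""
    alpha = 1 - confidence
    return NormalDist().inv_cdf(1 - alpha / 2)


for confidence in (0.90, 0.95, 0.99):
    cutoff = two_sided_critical_z(confidence)
    print(f"{confidence:.0%} confidence: reject H0 if |z| > {cutoff:.3f}")
```

This prints the familiar cutoffs of about 1.645, 1.960, and 2.576.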

Implications of Decision Outcomes

This part looks at the potential impacts of hypothesis testing, including the common errors associated with it and how they affect decisions in research and data analysis.

Impacts of Type I and Type II Errors

Type I errors, also known as false positives, occur when the null hypothesis is incorrectly rejected when it is actually true. This can lead researchers to believe there is an effect when there isn't one.

Type II errors, also known as false negatives, occur when the null hypothesis is not rejected even though it is false. This leads researchers to overlook a genuine effect in the data. Properly understanding and managing these errors is crucial for accurately interpreting hypothesis testing results and avoiding misleading conclusions in research and data analysis.
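The type II error rate depends on the size of the true effect and on the sample size. As a hedged sketch, assume a two-sided z-test with known standard deviation; the effect size, sample sizes, and function name below are hypothetical choices for illustration.

```python
from statistics import NormalDist


def type_ii_error(effect, sigma, n, alpha=0.05):
    """Probability that a two-sided z-test misses a true mean shift of `effect`."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = effect * n ** 0.5 / sigma  # expected z statistic under the alternative
    # H0 is retained when the z statistic falls inside (-z_crit, z_crit).
    return std_normal.cdf(z_crit - shift) - std_normal.cdf(-z_crit - shift)


beta = type_ii_error(effect=0.5, sigma=1.0, n=30)
print(f"type II error rate = {beta:.3f}, power = {1 - beta:.3f}")
print(f"with n = 60: {type_ii_error(effect=0.5, sigma=1.0, n=60):.3f}")
```

Increasing the sample size lowers the type II error rate for the same effect, while the type I error rate stays fixed at the significance level.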


Mastering hypothesis testing techniques is important for making informed statistical decisions in research and professional practice, and a solid grasp of statistical principles is necessary for accurate data interpretation and decision-making.


Hypothesis Testing | A Step-by-Step Guide with Easy Examples

Published on November 8, 2019 by Rebecca Bevans. Revised on June 22, 2023.

Hypothesis testing is a formal procedure for investigating our ideas about the world using statistics. It is most often used by scientists to test specific predictions, called hypotheses, that arise from theories.

There are 5 main steps in hypothesis testing:

- State your research hypothesis as a null hypothesis (H0) and an alternate hypothesis (Ha or H1).

- Collect data in a way designed to test the hypothesis.

- Perform an appropriate statistical test.

- Decide whether to reject or fail to reject your null hypothesis.

- Present the findings in your results and discussion section.

Though the specific details might vary, the procedure you will use when testing a hypothesis will always follow some version of these steps.

Table of contents

- Step 1: State your null and alternate hypothesis

- Step 2: Collect data

- Step 3: Perform a statistical test

- Step 4: Decide whether to reject or fail to reject your null hypothesis

- Step 5: Present your findings

- Other interesting articles

- Frequently asked questions about hypothesis testing

After developing your initial research hypothesis (the prediction that you want to investigate), it is important to restate it as a null (H0) and alternate (Ha) hypothesis so that you can test it mathematically.

The alternate hypothesis is usually your initial hypothesis that predicts a relationship between variables. The null hypothesis is a prediction of no relationship between the variables you are interested in.

- H0: Men are, on average, not taller than women.

- Ha: Men are, on average, taller than women.


For a statistical test to be valid, it is important to perform sampling and collect data in a way that is designed to test your hypothesis. If your data are not representative, then you cannot make statistical inferences about the population you are interested in.

There are a variety of statistical tests available, but they are all based on the comparison of within-group variance (how spread out the data is within a category) versus between-group variance (how different the categories are from one another).

If the between-group variance is large enough that there is little or no overlap between groups, then your statistical test will reflect that by showing a low p-value. This means it is unlikely that the differences between these groups came about by chance.

Alternatively, if there is high within-group variance and low between-group variance, then your statistical test will reflect that with a high p-value. This means it is likely that any difference you measure between groups is due to chance.
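The comparison of between-group and within-group variance can be sketched numerically. The block below computes the two mean squares and their ratio, which is the F statistic used in one-way ANOVA. The data and the function name `variance_components` are made up for illustration, and the sketch stops at the ratio rather than converting it to a p-value.

```python
from statistics import mean


def variance_components(groups):
    """Return (between-group mean square, within-group mean square, F ratio)."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    k = len(groups)
    n_total = len(all_values)
    # How far each group mean sits from the grand mean, weighted by group size.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # How spread out the values are around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    return ms_between, ms_within, ms_between / ms_within


# Hypothetical data: well-separated groups versus heavily overlapping groups.
separated = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
overlapping = [[1, 5, 9], [2, 5, 8], [3, 5, 7]]

print(variance_components(separated))
print(variance_components(overlapping))
```

A large ratio corresponds to the first case described above (little overlap, low p-value), and a ratio near zero corresponds to the second.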

Your choice of statistical test will be based on the type of variables and the level of measurement of your collected data.

In the height example, a statistical test comparing the two groups would give you:

- an estimate of the difference in average height between the two groups.

- a p-value showing how likely you are to see this difference if the null hypothesis of no difference is true.
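To show what these two outputs look like, here is a minimal sketch for the height example. It uses an exact permutation test because it needs only the standard library. This is a swapped-in technique chosen for illustration, not the test the article has in mind, and the height values and function name are invented.

```python
from itertools import combinations
from statistics import mean


def permutation_test_greater(group_a, group_b):
    """Exact one-sided permutation test of H0: mean(a) is not greater than mean(b).

    Returns (observed difference in means, p-value).
    """
    observed = mean(group_a) - mean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    n_b = len(pooled) - n_a
    total = sum(pooled)
    at_least_as_extreme = 0
    n_splits = 0
    # Enumerate every way of relabelling the pooled values into two groups.
    for idx in combinations(range(len(pooled)), n_a):
        sum_a = sum(pooled[i] for i in idx)
        diff = sum_a / n_a - (total - sum_a) / n_b
        if diff >= observed - 1e-12:
            at_least_as_extreme += 1
        n_splits += 1
    return observed, at_least_as_extreme / n_splits


# Hypothetical heights in centimetres.
men_cm = [178, 182, 175, 180, 185]
women_cm = [165, 160, 170, 162, 168]

difference, p_value = permutation_test_greater(men_cm, women_cm)
print(f"Estimated difference: {difference} cm, p-value: {p_value:.4f}")
```

With samples this small the enumeration is cheap (252 relabellings). For larger samples a random subset of relabellings, or a parametric test, would be the usual choice.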

Based on the outcome of your statistical test, you will have to decide whether to reject or fail to reject your null hypothesis.

In most cases you will use the p-value generated by your statistical test to guide your decision. And in most cases, your predetermined level of significance for rejecting the null hypothesis will be 0.05, that is, when there is a less than 5% chance that you would see these results if the null hypothesis were true.

In some cases, researchers choose a more conservative level of significance, such as 0.01 (1%). This minimizes the risk of incorrectly rejecting the null hypothesis (Type I error).
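The decision rule itself is short enough to write down. This is a minimal sketch with a hypothetical helper name, `decide`. It treats a p-value exactly equal to the significance level as a rejection, which is one common convention; some texts use a strict inequality instead.

```python
def decide(p_value, alpha=0.05):
    """Apply a predetermined significance level to a p-value."""
    if not 0 <= p_value <= 1:
        raise ValueError("p_value must be between 0 and 1")
    # Boundary convention (assumption): p == alpha counts as a rejection.
    return "reject H0" if p_value <= alpha else "fail to reject H0"


print(decide(0.03))              # default 0.05 level
print(decide(0.03, alpha=0.01))  # more conservative 0.01 level
```

The same p-value of 0.03 leads to different decisions at the two levels, which is why the level should be fixed before looking at the data.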


The results of hypothesis testing will be presented in the results and discussion sections of your research paper, dissertation or thesis.

In the results section you should give a brief summary of the data and a summary of the results of your statistical test (for example, the estimated difference between group means and associated p-value). In the discussion, you can discuss whether your initial hypothesis was supported by your results or not.

In the formal language of hypothesis testing, we talk about rejecting or failing to reject the null hypothesis. You will probably be asked to do this in your statistics assignments.

However, when presenting research results in academic papers we rarely talk this way. Instead, we go back to our alternate hypothesis (in this case, the hypothesis that men are on average taller than women) and state whether the result of our test did or did not support the alternate hypothesis.

If your null hypothesis was rejected, this result is interpreted as “supported the alternate hypothesis.”

These are superficial differences; you can see that they mean the same thing.

You might notice that we don’t say that we reject or fail to reject the alternate hypothesis. This is because hypothesis testing is not designed to prove or disprove anything. It is only designed to test whether a pattern we measure could have arisen spuriously, or by chance.

If we reject the null hypothesis based on our research (i.e., we find that it is unlikely that the pattern arose by chance), then we can say our test lends support to our hypothesis. But if the pattern does not pass our decision rule, meaning that it could have arisen by chance, then we say the test is inconsistent with our hypothesis.


Hypothesis testing is a formal procedure for investigating our ideas about the world using statistics. It is used by scientists to test specific predictions, called hypotheses, by calculating how likely it is that a pattern or relationship between variables could have arisen by chance.

A hypothesis states your predictions about what your research will find. It is a tentative answer to your research question that has not yet been tested. For some research projects, you might have to write several hypotheses that address different aspects of your research question.

A hypothesis is not just a guess — it should be based on existing theories and knowledge. It also has to be testable, which means you can support or refute it through scientific research methods (such as experiments, observations and statistical analysis of data).

Null and alternative hypotheses are used in statistical hypothesis testing. The null hypothesis of a test always predicts no effect or no relationship between variables, while the alternative hypothesis states your research prediction of an effect or relationship.


Bevans, R. (2023, June 22). Hypothesis Testing | A Step-by-Step Guide with Easy Examples. Scribbr. Retrieved June 24, 2024, from https://www.scribbr.com/statistics/hypothesis-testing/


13.1 Understanding Null Hypothesis Testing

Learning Objectives

- Explain the purpose of null hypothesis testing, including the role of sampling error.

- Describe the basic logic of null hypothesis testing.

- Describe the role of relationship strength and sample size in determining statistical significance and make reasonable judgments about statistical significance based on these two factors.

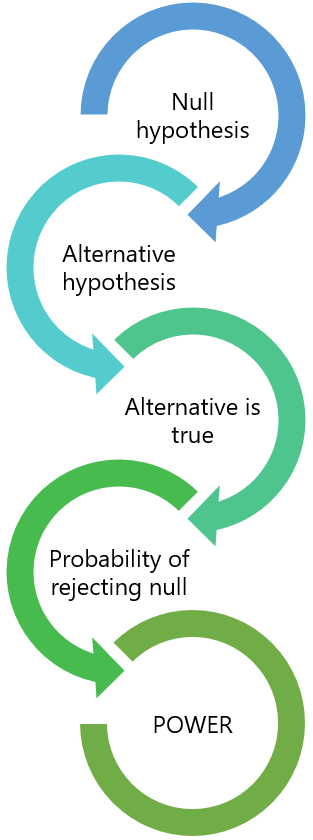

The Purpose of Null Hypothesis Testing

As we have seen, psychological research typically involves measuring one or more variables for a sample and computing descriptive statistics for that sample. In general, however, the researcher’s goal is not to draw conclusions about that sample but to draw conclusions about the population that the sample was selected from. Thus researchers must use sample statistics to draw conclusions about the corresponding values in the population. These corresponding values in the population are called parameters. Imagine, for example, that a researcher measures the number of depressive symptoms exhibited by each of 50 clinically depressed adults and computes the mean number of symptoms. The researcher probably wants to use this sample statistic (the mean number of symptoms for the sample) to draw conclusions about the corresponding population parameter (the mean number of symptoms for clinically depressed adults).

Unfortunately, sample statistics are not perfect estimates of their corresponding population parameters. This is because there is a certain amount of random variability in any statistic from sample to sample. The mean number of depressive symptoms might be 8.73 in one sample of clinically depressed adults, 6.45 in a second sample, and 9.44 in a third, even though these samples are selected randomly from the same population. Similarly, the correlation (Pearson’s r) between two variables might be +.24 in one sample, −.04 in a second sample, and +.15 in a third, again even though these samples are selected randomly from the same population. This random variability in a statistic from sample to sample is called sampling error. (Note that the term error here refers to random variability and does not imply that anyone has made a mistake. No one “commits a sampling error.”)
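Sampling error is easy to see in a simulation. The sketch below draws three random samples of 50 from one simulated population and prints their means. The population mean of 8, the standard deviation of 3, the normal shape, and the seed are all assumptions made for illustration and are not taken from any study.

```python
import random
from statistics import mean


def sample_means(pop_mean=8.0, pop_sd=3.0, n=50, n_samples=3, seed=1):
    """Means of several random samples drawn from the same normal population."""
    rng = random.Random(seed)
    return [
        mean([rng.gauss(pop_mean, pop_sd) for _ in range(n)])
        for _ in range(n_samples)
    ]


means = sample_means()
for i, m in enumerate(means, start=1):
    print(f"Sample {i}: mean = {m:.2f}")
```

Every sample comes from the same population, so the differences among the printed means reflect nothing but sampling error.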

One implication of this is that when there is a statistical relationship in a sample, it is not always clear that there is a statistical relationship in the population. A small difference between two group means in a sample might indicate that there is a small difference between the two group means in the population. But it could also be that there is no difference between the means in the population and that the difference in the sample is just a matter of sampling error. Similarly, a Pearson’s r value of −.29 in a sample might mean that there is a negative relationship in the population. But it could also be that there is no relationship in the population and that the relationship in the sample is just a matter of sampling error.

In fact, any statistical relationship in a sample can be interpreted in two ways:

- There is a relationship in the population, and the relationship in the sample reflects this.

- There is no relationship in the population, and the relationship in the sample reflects only sampling error.

The purpose of null hypothesis testing is simply to help researchers decide between these two interpretations.

The Logic of Null Hypothesis Testing

Null hypothesis testing is a formal approach to deciding between two interpretations of a statistical relationship in a sample. One interpretation is called the null hypothesis (often symbolized H0 and read as “H-naught”). This is the idea that there is no relationship in the population and that the relationship in the sample reflects only sampling error. Informally, the null hypothesis is that the sample relationship “occurred by chance.” The other interpretation is called the alternative hypothesis (often symbolized as H1). This is the idea that there is a relationship in the population and that the relationship in the sample reflects this relationship in the population.

Again, every statistical relationship in a sample can be interpreted in either of these two ways: It might have occurred by chance, or it might reflect a relationship in the population. So researchers need a way to decide between them. Although there are many specific null hypothesis testing techniques, they are all based on the same general logic. The steps are as follows:

- Assume for the moment that the null hypothesis is true. There is no relationship between the variables in the population.

- Determine how likely the sample relationship would be if the null hypothesis were true.

- If the sample relationship would be extremely unlikely, then reject the null hypothesis in favor of the alternative hypothesis. If it would not be extremely unlikely, then retain the null hypothesis.

Following this logic, we can begin to understand why Mehl and his colleagues concluded that there is no difference in talkativeness between women and men in the population. In essence, they asked the following question: “If there were no difference in the population, how likely is it that we would find a small difference of d = 0.06 in our sample?” Their answer to this question was that this sample relationship would be fairly likely if the null hypothesis were true. Therefore, they retained the null hypothesis—concluding that there is no evidence of a sex difference in the population. We can also see why Kanner and his colleagues concluded that there is a correlation between hassles and symptoms in the population. They asked, “If the null hypothesis were true, how likely is it that we would find a strong correlation of +.60 in our sample?” Their answer to this question was that this sample relationship would be fairly unlikely if the null hypothesis were true. Therefore, they rejected the null hypothesis in favor of the alternative hypothesis—concluding that there is a positive correlation between these variables in the population.

A crucial step in null hypothesis testing is finding the likelihood of the sample result if the null hypothesis were true. This probability is called the p value. A low p value means that the sample result would be unlikely if the null hypothesis were true and leads to the rejection of the null hypothesis. A high p value means that the sample result would be likely if the null hypothesis were true and leads to the retention of the null hypothesis.

But how low must the p value be before the sample result is considered unlikely enough to reject the null hypothesis? In null hypothesis testing, this criterion is called α (alpha) and is almost always set to .05. If there is less than a 5% chance of a result as extreme as the sample result if the null hypothesis were true, then the null hypothesis is rejected. When this happens, the result is said to be statistically significant. If there is greater than a 5% chance of a result as extreme as the sample result when the null hypothesis is true, then the null hypothesis is retained. This does not necessarily mean that the researcher accepts the null hypothesis as true, only that there is not currently enough evidence to reject it. Researchers often use the expression “fail to reject the null hypothesis” rather than “retain the null hypothesis,” but they never use the expression “accept the null hypothesis.”

The Misunderstood p Value

The p value is one of the most misunderstood quantities in psychological research (Cohen, 1994). Even professional researchers misinterpret it, and it is not unusual for such misinterpretations to appear in statistics textbooks!

The most common misinterpretation is that the p value is the probability that the null hypothesis is true, that is, that the sample result occurred by chance. For example, a misguided researcher might say that because the p value is .02, there is only a 2% chance that the result is due to chance and a 98% chance that it reflects a real relationship in the population. But this is incorrect. The p value is really the probability of a result at least as extreme as the sample result if the null hypothesis were true. So a p value of .02 means that if the null hypothesis were true, a sample result this extreme would occur only 2% of the time.

You can avoid this misunderstanding by remembering that the p value is not the probability that any particular hypothesis is true or false. Instead, it is the probability of obtaining a result at least as extreme as the sample result if the null hypothesis were true.
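The definition can be checked by direct calculation in a simple case. The following sketch is a made-up coin-flip example rather than one from the text: it computes an exact two-sided p value for 15 heads in 20 flips under the null hypothesis of a fair coin. "At least as extreme" is taken here to mean at least as far from the expected count as the observed count, a definition that suits a symmetric null such as 0.5; other definitions exist for asymmetric cases.

```python
from math import comb


def binomial_two_sided_p(successes, n, null_p=0.5):
    """P(count at least as far from the expected count as observed | H0 is true)."""
    expected = n * null_p
    observed_distance = abs(successes - expected)
    return sum(
        comb(n, k) * null_p**k * (1 - null_p) ** (n - k)
        for k in range(n + 1)
        if abs(k - expected) >= observed_distance
    )


p = binomial_two_sided_p(successes=15, n=20)
print(f"p = {p:.3f}")  # about 0.041
```

The result says that a fair coin would produce a count this lopsided about 4% of the time. It does not say there is a 4% chance that the coin is fair.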

Role of Sample Size and Relationship Strength

Recall that null hypothesis testing involves answering the question, “If the null hypothesis were true, what is the probability of a sample result as extreme as this one?” In other words, “What is the p value?” It can be helpful to see that the answer to this question depends on just two considerations: the strength of the relationship and the size of the sample. Specifically, the stronger the sample relationship and the larger the sample, the less likely the result would be if the null hypothesis were true. That is, the lower the p value. This should make sense. Imagine a study in which a sample of 500 women is compared with a sample of 500 men in terms of some psychological characteristic, and Cohen’s d is a strong 0.50. If there were really no sex difference in the population, then a result this strong based on such a large sample should seem highly unlikely. Now imagine a similar study in which a sample of three women is compared with a sample of three men, and Cohen’s d is a weak 0.10. If there were no sex difference in the population, then a relationship this weak based on such a small sample should seem likely. And this is precisely why the null hypothesis would be rejected in the first example and retained in the second.

Of course, sometimes the result can be weak and the sample large, or the result can be strong and the sample small. In these cases, the two considerations trade off against each other so that a weak result can be statistically significant if the sample is large enough and a strong relationship can be statistically significant even if the sample is small. Table 13.1 “How Relationship Strength and Sample Size Combine to Determine Whether a Result Is Statistically Significant” shows roughly how relationship strength and sample size combine to determine whether a sample result is statistically significant. The columns of the table represent the three levels of relationship strength: weak, medium, and strong. The rows represent four sample sizes that can be considered small, medium, large, and extra large in the context of psychological research. Thus each cell in the table represents a combination of relationship strength and sample size. If a cell contains the word Yes, then this combination would be statistically significant for both Cohen’s d and Pearson’s r. If it contains the word No, then it would not be statistically significant for either. There is one cell where the decision for d and r would be different and another where it might be different depending on some additional considerations, which are discussed in Section 13.2 “Some Basic Null Hypothesis Tests.”

Table 13.1 How Relationship Strength and Sample Size Combine to Determine Whether a Result Is Statistically Significant

| Sample size | Weak relationship | Medium relationship | Strong relationship |
|---|---|---|---|
| Small (N = 20) | No | No | d = Maybe, r = Yes |
| Medium (N = 50) | No | Yes | Yes |
| Large (N = 100) | d = Yes, r = No | Yes | Yes |
| Extra large (N = 500) | Yes | Yes | Yes |

Although Table 13.1 “How Relationship Strength and Sample Size Combine to Determine Whether a Result Is Statistically Significant” provides only a rough guideline, it shows very clearly that weak relationships based on medium or small samples are never statistically significant and that strong relationships based on medium or larger samples are always statistically significant. If you keep this in mind, you will often know whether a result is statistically significant based on the descriptive statistics alone. It is extremely useful to be able to develop this kind of intuitive judgment. One reason is that it allows you to develop expectations about how your formal null hypothesis tests are going to come out, which in turn allows you to detect problems in your analyses. For example, if your sample relationship is strong and your sample is medium, then you would expect to reject the null hypothesis. If for some reason your formal null hypothesis test indicates otherwise, then you need to double-check your computations and interpretations. A second reason is that the ability to make this kind of intuitive judgment is an indication that you understand the basic logic of this approach in addition to being able to do the computations.
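The pattern in the table can be roughly reproduced for Pearson's r. The sketch below uses the Fisher z approximation, a large-sample shortcut chosen so that only the standard library is needed; it is not the test the textbook describes. The values .10, .30, and .50 for weak, medium, and strong relationships are an assumption based on Cohen's common conventions for r.

```python
from math import atanh, sqrt
from statistics import NormalDist


def approx_p_value_for_r(r, n):
    """Approximate two-sided p value for H0: no correlation, via Fisher's z."""
    z = atanh(r) * sqrt(n - 3)
    return 2 * (1 - NormalDist().cdf(abs(z)))


strengths = {"weak": 0.10, "medium": 0.30, "strong": 0.50}  # assumed values of r
sample_sizes = [20, 50, 100, 500]

for n in sample_sizes:
    cells = []
    for label, r in strengths.items():
        significant = approx_p_value_for_r(r, n) < 0.05
        cells.append(f"{label}: {'Yes' if significant else 'No'}")
    print(f"N = {n:>3} | " + " | ".join(cells))
```

Because this is an approximation, cells near the .05 boundary could come out differently under an exact test, so the output is best read as the same kind of rough guideline as the table.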

Statistical Significance Versus Practical Significance

Table 13.1 “How Relationship Strength and Sample Size Combine to Determine Whether a Result Is Statistically Significant” illustrates another extremely important point. A statistically significant result is not necessarily a strong one. Even a very weak result can be statistically significant if it is based on a large enough sample. This is closely related to Janet Shibley Hyde’s argument about sex differences (Hyde, 2007). The differences between women and men in mathematical problem solving and leadership ability are statistically significant. But the word significant can cause people to interpret these differences as strong and important—perhaps even important enough to influence the college courses they take or even who they vote for. As we have seen, however, these statistically significant differences are actually quite weak—perhaps even “trivial.”

This is why it is important to distinguish between the statistical significance of a result and the practical significance of that result. Practical significance refers to the importance or usefulness of the result in some real-world context. Many sex differences are statistically significant—and may even be interesting for purely scientific reasons—but they are not practically significant. In clinical practice, this same concept is often referred to as “clinical significance.” For example, a study on a new treatment for social phobia might show that it produces a statistically significant positive effect. Yet this effect still might not be strong enough to justify the time, effort, and other costs of putting it into practice—especially if easier and cheaper treatments that work almost as well already exist. Although statistically significant, this result would be said to lack practical or clinical significance.
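A short calculation shows how statistical and practical significance can come apart. The sketch below computes Cohen's d from summary statistics and then an approximate p value using a large-sample normal approximation. All of the numbers are hypothetical, and the function names are illustrative.

```python
from math import sqrt
from statistics import NormalDist


def cohens_d(mean_1, mean_2, sd_1, sd_2, n_1, n_2):
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((n_1 - 1) * sd_1**2 + (n_2 - 1) * sd_2**2) / (n_1 + n_2 - 2)
    return (mean_1 - mean_2) / sqrt(pooled_var)


def approx_two_sided_p(d, n_1, n_2):
    """Large-sample normal approximation to the two-sample test (assumption)."""
    z = d / sqrt(1 / n_1 + 1 / n_2)
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical study: a half-point difference on a scale with SD = 10.
d = cohens_d(100.5, 100.0, 10.0, 10.0, 10_000, 10_000)
p_large = approx_two_sided_p(d, 10_000, 10_000)  # 10,000 per group
p_small = approx_two_sided_p(d, 50, 50)          # same effect, 50 per group

print(f"d = {d:.2f}, p (n = 10,000 per group) = {p_large:.4f}")
print(f"d = {d:.2f}, p (n = 50 per group)     = {p_small:.2f}")
```

The effect size is identical in both cases and very small. Only the sample size changes, and with it the verdict on statistical significance.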

Key Takeaways

- Null hypothesis testing is a formal approach to deciding whether a statistical relationship in a sample reflects a real relationship in the population or is just due to chance.

- The logic of null hypothesis testing involves assuming that the null hypothesis is true, finding how likely the sample result would be if this assumption were correct, and then making a decision. If the sample result would be unlikely if the null hypothesis were true, then it is rejected in favor of the alternative hypothesis. If it would not be unlikely, then the null hypothesis is retained.

- The probability of obtaining the sample result if the null hypothesis were true (the p value) is based on two considerations: relationship strength and sample size. Reasonable judgments about whether a sample relationship is statistically significant can often be made by quickly considering these two factors.

- Statistical significance is not the same as relationship strength or importance. Even weak relationships can be statistically significant if the sample size is large enough. It is important to consider relationship strength and the practical significance of a result in addition to its statistical significance.

- Discussion: Imagine a study showing that people who eat more broccoli tend to be happier. Explain for someone who knows nothing about statistics why the researchers would conduct a null hypothesis test.

Practice: Use Table 13.1 “How Relationship Strength and Sample Size Combine to Determine Whether a Result Is Statistically Significant” to decide whether each of the following results is statistically significant.

- The correlation between two variables is r = −.78 based on a sample size of 137.

- The mean score on a psychological characteristic for women is 25 ( SD = 5) and the mean score for men is 24 ( SD = 5). There were 12 women and 10 men in this study.

- In a memory experiment, the mean number of items recalled by the 40 participants in Condition A was 0.50 standard deviations greater than the mean number recalled by the 40 participants in Condition B.

- In another memory experiment, the mean scores for participants in Condition A and Condition B came out exactly the same!

- A student finds a correlation of r = .04 between the number of units the students in his research methods class are taking and the students’ level of stress.

Cohen, J. (1994). The earth is round (p < .05). American Psychologist, 49, 997–1003.

Hyde, J. S. (2007). New directions in the study of gender similarities and differences. Current Directions in Psychological Science, 16, 259–263.

Research Methods in Psychology Copyright © 2016 by University of Minnesota is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License, except where otherwise noted.

9.1 Null and Alternative Hypotheses

The actual test begins by considering two hypotheses. They are called the null hypothesis and the alternative hypothesis. These hypotheses contain opposing viewpoints.

H0, the null hypothesis: a statement of no difference between sample means or proportions, or no difference between a sample mean or proportion and a population mean or proportion. In other words, the difference equals 0.

Ha, the alternative hypothesis: a claim about the population that is contradictory to H0 and what we conclude when we reject H0.

Since the null and alternative hypotheses are contradictory, you must examine evidence to decide if you have enough evidence to reject the null hypothesis or not. The evidence is in the form of sample data.

After you have determined which hypothesis the sample supports, you make a decision. There are two options: reject H0 if the sample information favors the alternative hypothesis, or do not reject H0 (decline to reject H0) if the sample information is insufficient to reject the null hypothesis.

Mathematical Symbols Used in H0 and Ha:

| H0 | Ha |
|---|---|
| equal (=) | not equal (≠), greater than (>), or less than (<) |
| greater than or equal to (≥) | less than (<) |
| less than or equal to (≤) | more than (>) |

H0 always has a symbol with an equal in it. Ha never has a symbol with an equal in it. The choice of symbol depends on the wording of the hypothesis test. However, be aware that many researchers use = in the null hypothesis, even with > or < as the symbol in the alternative hypothesis. This practice is acceptable because we only make the decision to reject or not reject the null hypothesis.
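The pairing of symbols can be written as a small lookup. This is a minimal sketch: the dictionary and the helper `state_hypotheses` are hypothetical names, and the mapping follows the convention in which the null hypothesis is the complement of the alternative.

```python
# Alternative-hypothesis symbol -> matching null-hypothesis symbol.
NULL_SYMBOL_FOR_ALTERNATIVE = {"≠": "=", ">": "≤", "<": "≥"}


def state_hypotheses(parameter, alternative_symbol, value):
    """Return the (H0, Ha) statements for a parameter and a claimed alternative."""
    null_symbol = NULL_SYMBOL_FOR_ALTERNATIVE[alternative_symbol]
    return (
        f"H0: {parameter} {null_symbol} {value}",
        f"Ha: {parameter} {alternative_symbol} {value}",
    )


print(state_hypotheses("μ", "≠", 2.0))
print(state_hypotheses("μ", "<", 5))
```

Starting from the alternative is convenient because the research question is usually worded as the claim to be supported; the null symbol then follows mechanically.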

Example 9.1

H0: No more than 30 percent of the registered voters in Santa Clara County voted in the primary election. p ≤ 0.30

Ha: More than 30 percent of the registered voters in Santa Clara County voted in the primary election. p > 0.30

A medical trial is conducted to test whether or not a new medicine reduces cholesterol by 25 percent. State the null and alternative hypotheses.

Example 9.2

We want to test whether the mean GPA of students in American colleges is different from 2.0 (out of 4.0). The null and alternative hypotheses are the following:

H0: μ = 2.0

Ha: μ ≠ 2.0

We want to test whether the mean height of eighth graders is 66 inches. State the null and alternative hypotheses. Fill in the correct symbol (=, ≠, ≥, <, ≤, >) for the null and alternative hypotheses.

- H0: μ __ 66

- Ha: μ __ 66

Example 9.3

We want to test if college students take fewer than five years to graduate from college, on the average. The null and alternative hypotheses are the following:

H0: μ ≥ 5

Ha: μ < 5

We want to test if it takes fewer than 45 minutes to teach a lesson plan. State the null and alternative hypotheses. Fill in the correct symbol (=, ≠, ≥, <, ≤, >) for the null and alternative hypotheses.

- H0: μ __ 45

- Ha: μ __ 45

Example 9.4

An article on school standards stated that about half of all students in France, Germany, and Israel take advanced placement exams and a third of the students pass. The same article stated that 6.6 percent of U.S. students take advanced placement exams and 4.4 percent pass. Test if the percentage of U.S. students who take advanced placement exams is more than 6.6 percent. State the null and alternative hypotheses.

H0: p ≤ 0.066

Ha: p > 0.066

On a state driver’s test, about 40 percent pass the test on the first try. We want to test if more than 40 percent pass on the first try. Fill in the correct symbol (=, ≠, ≥, <, ≤, >) for the null and alternative hypotheses.

- H0: p __ 0.40

- Ha: p __ 0.40

Collaborative Exercise

Bring to class a newspaper, some news magazines, and some internet articles. In groups, find articles from which your group can write null and alternative hypotheses. Discuss your hypotheses with the rest of the class.


This book may not be used in the training of large language models or otherwise be ingested into large language models or generative AI offerings without OpenStax's permission.

Want to cite, share, or modify this book? This book uses the Creative Commons Attribution License and you must attribute Texas Education Agency (TEA). The original material is available at: https://www.texasgateway.org/book/tea-statistics . Changes were made to the original material, including updates to art, structure, and other content updates.

Access for free at https://openstax.org/books/statistics/pages/1-introduction

- Authors: Barbara Illowsky, Susan Dean

- Publisher/website: OpenStax

- Book title: Statistics

- Publication date: Mar 27, 2020

- Location: Houston, Texas

- Book URL: https://openstax.org/books/statistics/pages/1-introduction

- Section URL: https://openstax.org/books/statistics/pages/9-1-null-and-alternative-hypotheses

© Jan 23, 2024 Texas Education Agency (TEA). The OpenStax name, OpenStax logo, OpenStax book covers, OpenStax CNX name, and OpenStax CNX logo are not subject to the Creative Commons license and may not be reproduced without the prior and express written consent of Rice University.


Statistics By Jim

Making statistics intuitive

Failing to Reject the Null Hypothesis

By Jim Frost

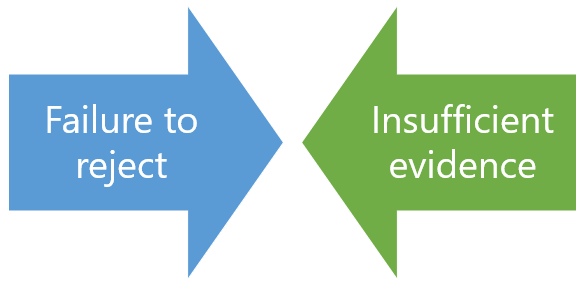

Failing to reject the null hypothesis is an odd way to state that the results of your hypothesis test are not statistically significant. Why the peculiar phrasing? “Fail to reject” sounds like one of those double negatives that writing classes taught you to avoid. What does it mean exactly? There’s an excellent reason for the odd wording!

In this post, learn what it means when you fail to reject the null hypothesis and why that’s the correct wording. While accepting the null hypothesis sounds more straightforward, it is not statistically correct!

Before proceeding, let’s recap some necessary information. In all statistical hypothesis tests, you have the following two hypotheses:

- The null hypothesis states that there is no effect or relationship between the variables.

- The alternative hypothesis states the effect or relationship exists.

We assume that the null hypothesis is correct until we have enough evidence to suggest otherwise.

After you perform a hypothesis test, there are only two possible outcomes.

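- When your p-value is less than or equal to your significance level, you reject the null hypothesis. Your results are statistically significant.

- When your p-value is greater than your significance level, you fail to reject the null hypothesis. Your results are not significant. You’ll learn more about interpreting this outcome later in this post.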


Why Don’t Statisticians Accept the Null Hypothesis?

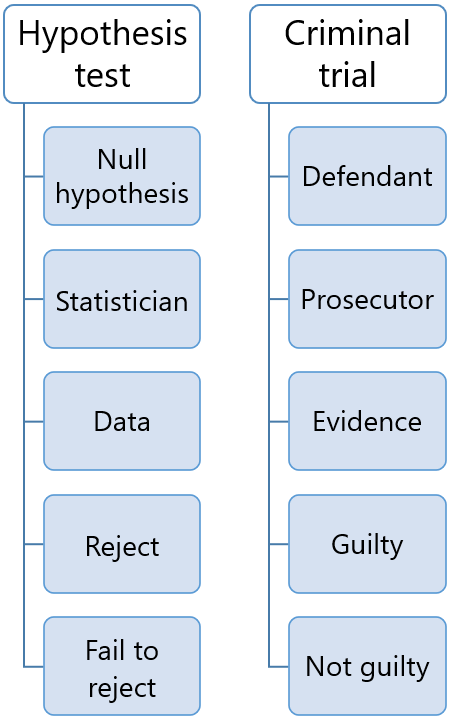

To understand why we don’t accept the null, consider the fact that you can’t prove a negative. A lack of evidence only means that you haven’t proven that something exists. It does not prove that something doesn’t exist. It might exist, but your study missed it. That’s a huge difference and it is the reason for the convoluted wording. Let’s look at several analogies.

Species Presumed to be Extinct

Lack of proof doesn’t represent proof that something doesn’t exist!

Criminal Trials

Perhaps the prosecutor conducted a shoddy investigation and missed clues? Or, the defendant successfully covered his tracks? Consequently, the verdict in these cases is “not guilty.” That judgment doesn’t say the defendant is proven innocent, just that there wasn’t enough evidence to move the jury from the default assumption of innocence.

Hypothesis Tests

The hypothesis test assesses the evidence in your sample. If your test fails to detect an effect, it’s not proof that the effect doesn’t exist. It just means your sample contained an insufficient amount of evidence to conclude that it exists. Like the species that were presumed extinct, or the prosecutor who missed clues, the effect might exist in the overall population but not in your particular sample. Consequently, the test results fail to reject the null hypothesis, which is analogous to a “not guilty” verdict in a trial. There just wasn’t enough evidence to move the hypothesis test from the default position that the null is true.

The critical point across these analogies is that a lack of evidence does not prove something does not exist—just that you didn’t find it in your specific investigation. Hence, you never accept the null hypothesis.

Related post: The Significance Level as an Evidentiary Standard

What Does Fail to Reject the Null Hypothesis Mean?

Accepting the null hypothesis would indicate that you’ve proven an effect doesn’t exist. As you’ve seen, that’s not the case at all. You can’t prove a negative! Instead, the strength of your evidence falls short of being able to reject the null. Consequently, we fail to reject it.

Failing to reject the null indicates that our sample did not provide sufficient evidence to conclude that the effect exists. However, at the same time, that lack of evidence doesn’t prove that the effect does not exist. Capturing all that information leads to the convoluted wording!

What are the possible implications of failing to reject the null hypothesis? Let’s work through them.

First, it is possible that the effect truly doesn’t exist in the population, which is why your hypothesis test didn’t detect it in the sample. Makes sense, right? While that is one possibility, it doesn’t end there.

Another possibility is that the effect exists in the population, but the test didn’t detect it for a variety of reasons. These reasons include the following:

- The sample size was too small to detect the effect.

- The variability in the data was too high. The effect exists, but the noise in your data swamped the signal (effect).

- By chance, you collected a fluky sample. When dealing with random samples, chance always plays a role in the results. The luck of the draw might have caused your sample not to reflect an effect that exists in the population.

Notice how studies that collect a small amount of data or low-quality data are likely to miss an effect that exists? These studies had inadequate statistical power to detect the effect. We certainly don’t want to take results from low-quality studies as proof that something doesn’t exist!
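The effect of sample size and variability on statistical power can be made concrete with a small calculation. The following is a minimal sketch, assuming a two-sided one-sample z-test with a known population standard deviation. The function name `z_test_power` and the example numbers are hypothetical and do not come from the post.

```python
from math import sqrt
from statistics import NormalDist


def z_test_power(effect: float, sd: float, n: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided one-sample z-test.

    effect: true difference between the population mean and the null value
    sd:     population standard deviation (assumed known)
    n:      sample size
    """
    if sd <= 0 or n <= 0:
        raise ValueError("sd and n must be positive")
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # How far the true effect shifts the test statistic, in standard errors.
    shift = effect * sqrt(n) / sd
    # Probability that the statistic lands in either rejection region.
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


# Same true effect, different study designs (illustrative numbers only).
small_sample = z_test_power(effect=5, sd=20, n=10)
large_sample = z_test_power(effect=5, sd=20, n=200)
noisy_data = z_test_power(effect=5, sd=60, n=200)
print(f"n=10: {small_sample:.2f}  n=200: {large_sample:.2f}  n=200, noisy: {noisy_data:.2f}")
```

Under these assumptions, the small sample and the noisy data both leave the test with low power, so failing to reject the null in those designs says little about whether the effect exists.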

However, failing to detect an effect does not necessarily mean a study is low-quality. Random chance in the sampling process can work against even the best research projects!

Reader Interactions

May 8, 2024 at 9:08 am

Thank you very much for explaining the topic. It brings clarity and makes statistics very simple and interesting. It’s helping me in the field of medical research.

February 26, 2024 at 7:54 pm

Hi Jim, my question is: can I reverse the null hypothesis and start with Null: µ1 ≠ µ2? Then, if I can reject the null, I will end up with µ1 = µ2 for the mean comparison, and this is what I am looking for. But isn’t this cheating?

February 26, 2024 at 11:41 pm

That can be done, but it requires you to revamp the entire test. Keep in mind that the reason you normally start out with the null equating to no relationship is that researchers typically want to prove that a relationship or effect exists. This format forces the researchers to collect a substantial amount of high-quality data to have a chance at demonstrating that an effect exists. If they collect a small sample and/or poor-quality data (e.g., noisy or imprecise), then the results default back to the null stating that no effect exists. So, they have to collect good data and work hard to get findings that suggest the effect exists.

There are tests that flip it around as you suggest, where the null states that a relationship does exist. For example, researchers perform an equivalence test when they want to show that there is no difference, that is, that the groups are equal. The test is designed such that it requires a good sample size and high-quality data to have a chance at proving equivalence. If they have a small sample size and/or poor-quality data, the results default back to the groups being unequal, which is not what they want to show.

So, choose the null hypothesis and corresponding analysis based on what you hope to find. Choose the null hypothesis that forces you to work hard to reject it and get the results that you want. It forces you to collect better evidence to make your case and the results default back to what you don’t want if you do a poor job.

I hope that makes sense!

October 13, 2023 at 5:10 am

Really appreciate how you have been able to explain something difficult in very simple terms. Also covering why you can’t accept a null hypothesis – something which I think is frequently missed. Thank you, Jim.

February 22, 2022 at 11:18 am

Hi Jim, I really appreciate your blog, making difficult things sound simple is a great gift.

I have a question about the p-value. You said there are two options when it comes to hypothesis test results: rejecting or failing to reject the null, depending on the p-value and your significance level.

But does a p-value of 0.001 mean stronger evidence than a p-value of 0.01 (both with a significance level of 5%)? Or does it not matter, and every p-value under your significance level carries the same weight of evidence against the null?

I hope I made my point clear. Thanks a lot for your time.

February 23, 2022 at 9:06 pm

There are different schools of thought about this question. The traditional approach is clear cut. Your results are statistically significant when your p-value is less than or equal to your significance level. When the p-value is greater than the significance level, your results are not significant.

However, as you point out, lower p-values indicate stronger evidence against the null hypothesis. I write about this aspect of p-values in my articles on interpreting p-values (near the end) and on p-values and reproducibility.

Personally, I consider both aspects. P-values near 0.05 provide weak evidence. Consequently, I’d be willing to say that p-values less than or equal to 0.05 are statistically significant, but when they’re near 0.05, I’d consider it a preliminary result that requires more research. However, if the p-value is less than 0.01, or even better, less than 0.001, then that’s much stronger evidence and I’ll give those results more weight in my evaluation.

If you read those two articles, I think you’ll see what I mean.

January 1, 2022 at 6:00 pm

Hi, I have a quick question that you may be able to help me with. I am using SPSS to carry out a Mann-Whitney U test, and it says to retain the null hypothesis. The hypothesis is that males are faster than women at completing a task. So is that saying that they are or are not?

January 1, 2022 at 8:17 pm

In that case, your sample data provides insufficient evidence to conclude that males are faster. The results do not prove that males and females are the same speed. You just don’t have enough evidence to say males are faster. In this post, I cover the reasons why you can’t prove the null is true.

November 23, 2021 at 5:36 pm

What if I have to show in my hypothesis that the treatment has no effect on patients? Can I say that if my null hypothesis is accepted, I have got my result (no effect)? I am confused about what to do in this situation. For the null hypothesis, we always have to write it with some type of equality. What if I want my result to be what I have stated in the null hypothesis, i.e., no effect? How do I write the statements in this case? I am using a nonparametric test, the Mann-Whitney U test.

November 27, 2021 at 4:56 pm

You need to perform an equivalence test, which is a special type of procedure for when you want to prove that the results are equal. The problem with a regular hypothesis test is that when you fail to reject the null, you’re not proving that the outcomes are equal. You can fail to reject the null thanks to a small sample size, noisy data, or a small effect size even when the outcomes are truly different at the population level. An equivalence test sets things up so you need strong evidence to really show that two outcomes are equal.

Unfortunately, I don’t have any content for equivalence testing at this point, but you can read an article about it at Wikipedia: Equivalence Test.
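As a rough illustration of how an equivalence test reverses the burden of proof, here is a minimal sketch of the two one-sided tests (TOST) procedure, which is one common way to run an equivalence test. It assumes a normal approximation for the difference in means, so it is not the nonparametric version the question asks about. The function name `tost_p_value`, the equivalence margin, and the example numbers are all hypothetical.

```python
from statistics import NormalDist


def tost_p_value(mean_diff: float, se: float, margin: float) -> float:
    """P-value of a TOST equivalence test using a normal approximation.

    Null hypothesis: the true difference lies outside (-margin, +margin).
    mean_diff: observed difference between the two group means
    se:        standard error of that difference
    margin:    largest difference still considered practically equal
    """
    if se <= 0 or margin <= 0:
        raise ValueError("se and margin must be positive")
    std_normal = NormalDist()
    # One-sided test against "true difference <= -margin".
    p_lower = 1 - std_normal.cdf((mean_diff + margin) / se)
    # One-sided test against "true difference >= +margin".
    p_upper = std_normal.cdf((mean_diff - margin) / se)
    # Both one-sided nulls must be rejected, so report the larger p-value.
    return max(p_lower, p_upper)


alpha = 0.05
precise = tost_p_value(mean_diff=0.1, se=0.5, margin=2.0)  # large, clean sample
noisy = tost_p_value(mean_diff=0.1, se=2.0, margin=2.0)    # small or noisy sample
print("precise data shows equivalence:", precise <= alpha)
print("noisy data shows equivalence:", noisy <= alpha)
```

With the same observed difference, only the precise estimate rejects the null of non-equivalence. The noisy estimate defaults back to "not shown to be equal", which mirrors how a regular test defaults back to "no effect shown".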

August 13, 2021 at 9:41 pm

Great explanation and great analogies! Thanks.

August 11, 2021 at 2:02 am

I have a problem with my analysis. I ran wound-healing experiments with drug treatments (9 groups in total). When I ran the two-way ANOVA in Excel, I got significant results for Sample (Drug Treatment) and Columns (Day, Timeline), but the interaction was not significant. Can I still reject the null hypothesis and continue with the post-hoc test?

Thank you very much.

June 13, 2021 at 4:51 am

Hi Jim, there are so many books covering the maths/programming related to statistics/DS, but hardly any that help develop an intuitive understanding. Thank you for filling that gap. After statistics, hypothesis testing, and regression, will it be possible for you to write such books on more topics in DS, such as trees, deep learning, etc.?

I recently started reading your book on hypothesis testing (just finished the first chapter). I have a question about the fuel cost example (from the first chapter), where a random sample of 25 families (with sample mean 330.6) is taken. To do the hypothesis test here, we take a sampling distribution with a mean of 260. Then, based on the p-value and significance level, we decide whether to reject or accept the null hypothesis. The entire decision (to accept or reject the null hypothesis) is based on the sampling distribution, about which I have the following questions: a) We are assuming that the sampling distribution is normally distributed. What if it has some other distribution, and how can we find that out? b) We have assumed that the sampling distribution is normally distributed and then further assumed that its mean is 260 (as required for the hypothesis test). But we need the standard deviation as well to define the normal distribution. Can you please let me know how we find the standard deviation for the sampling distribution? Thanks.

April 24, 2021 at 2:25 pm

Maybe it’s the idea of “Innocent until proven guilty”? Your null assumes the person is not guilty, and your alternative assumes the person is guilty, only when you have enough evidence (finding statistical significance P0.05 you have failed to reject null hypothesis, null stands, implying the person is not guilty. Or, the person remains innocent. Correct me if you think it’s wrong, but this is the way I interpreted it.

April 25, 2021 at 5:10 pm

I used the courtroom/trial analogy within this post. Read that for more details. I’d agree with your general take on the issue except when you have enough evidence you actually reject the null, which in the trial means the defendant is found guilty.

April 17, 2021 at 6:10 am

Can regression analysis be done using 5 companies variables for predicting working capital management and profitability positive/negative relationship?

Also, does rejecting the null hypothesis mean that whatever is stated in the null hypothesis has been proven false through the regression analysis?

I have very little knowledge of regression analysis. Please help me, Sir, as my project report is due next week. Thanks in advance!

April 18, 2021 at 10:48 pm

Hi Ahmed, yes, regression analysis can be used for the scenario you describe as long as you have the required data.

For more about the null hypothesis in relation to regression analysis, read my post about regression coefficients and their p-values. I describe the null hypothesis in it.

January 26, 2021 at 7:32 pm

With regard to the legal example above: while your explanation makes sense when simplified to this statistical level, from a legal perspective it is not correct. The presumption of innocence means one does not need to be proven innocent. They are innocent. The onus of proof lies with proving they are guilty. So if you can’t prove someone’s guilt, then in fact you must accept the null hypothesis that they are innocent. It’s not a statistical test, so it’s a little bit misleading to use it as an example, although I see why you would.